Why Most AI Automations Fail in Production

Steven Janiak

Founder & AI Systems Architect

Updated February 23, 2026

Most AI automations fail after launch, not during development. They work in demos, break under real-world conditions, and create more operational friction than they remove. The reason is rarely the model. It is almost always the system around it.

Key Takeaway

"Most AI automations fail not because the model is bad, but because they were never designed as production systems with deterministic logic, integration safeguards, and failure handling."

Most AI automations look impressive in demos.

Very few survive real business conditions.

They work in controlled environments. They fail when phones ring nonstop, data is messy, customers say unexpected things, and systems need to stay online without supervision.

This is not a model problem.

It is a systems problem.

The Demo Trap

Many AI tools are built to impress in short demos.

They assume:

- Clean inputs

- Cooperative users

- Perfect integrations

- Low volume

- No edge cases

Real businesses have none of those.

In production, AI systems must handle interruptions, retries, malformed data, timeouts, partial failures, and human unpredictability. Most automations are never designed for that reality.

Prompts Are Not Systems

A prompt is not infrastructure.

Model output is probabilistic by nature. The same prompt can behave differently depending on phrasing, context length, and model updates. That makes a prompt fragile when it is the only thing controlling behavior.

Production systems require:

- Deterministic logic paths

- Hard constraints

- Fallback rules

- Clear failure states

- Observability

Without those layers, AI becomes a liability instead of leverage.
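To make those layers concrete, here is a minimal sketch in Python of a deterministic guard placed around a model's output. It is an illustration under stated assumptions, not a reference implementation: the JSON shape with an `intent` field, the `ALLOWED_INTENTS` set, and the names `decide`, `Decision`, and `Outcome` are all hypothetical.

```python
import json
import logging
from dataclasses import dataclass
from enum import Enum
from typing import Optional

logger = logging.getLogger("automation.guard")

# Hard constraint (hypothetical list): the only intents the system may act on.
ALLOWED_INTENTS = {"book", "reschedule", "cancel"}


class Outcome(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate"  # clear failure state: hand off to a human


@dataclass
class Decision:
    outcome: Outcome
    reason: str
    intent: Optional[str] = None


def _escalate(reason: str) -> Decision:
    # Observability: every rejected output is logged with a reason code.
    logger.warning("escalating: %s", reason)
    return Decision(Outcome.ESCALATE, reason)


def decide(raw_model_output: str) -> Decision:
    """Turn untrusted model text into a deterministic decision."""
    try:
        data = json.loads(raw_model_output)
    except json.JSONDecodeError:
        return _escalate("unparseable_output")
    if not isinstance(data, dict):
        return _escalate("unexpected_shape")
    intent = data.get("intent")
    # Fallback rule: anything outside the allowed set is never acted on.
    if not isinstance(intent, str) or intent not in ALLOWED_INTENTS:
        return _escalate("intent_not_allowed")
    logger.info("proceeding with intent=%s", intent)
    return Decision(Outcome.PROCEED, "ok", intent)
```

The model still interprets the conversation, but code decides what is allowed to happen next. Every path ends in a named outcome with a reason code, so a bad output becomes a logged escalation instead of a silent failure.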

Integration Is Where Most Systems Break

Most AI automation failures happen at the seams between tools, not inside the model.

A voice assistant that books appointments is useless if:

- The CRM does not sync correctly

- The calendar API times out

- The lead data is malformed

- The call ends early

- The system retries incorrectly

Production-grade automation treats integrations as first-class components, not afterthoughts.
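As one way to picture the "retries incorrectly" failure and its fix, the sketch below retries a flaky integration call a bounded number of times with exponential backoff, and reuses a single idempotency key across attempts so a retry cannot create a second booking. It assumes the downstream API accepts such a key and deduplicates on it. `TransientError`, `call_with_retry`, and the `action` callable are hypothetical names for illustration.

```python
import time
import uuid
from typing import Callable


class TransientError(Exception):
    """Hypothetical error type for timeouts and other retryable failures."""


def call_with_retry(
    action: Callable[[str], str],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call action(idempotency_key), retrying transient failures."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    # One key for every attempt, so the remote side can deduplicate.
    key = str(uuid.uuid4())
    for attempt in range(1, max_attempts + 1):
        try:
            return action(key)
        except TransientError:
            if attempt == max_attempts:
                raise  # bounded: surface the failure instead of looping forever
            # Exponential backoff: base_delay, then 2x, then 4x, ...
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")
```

Only errors marked as transient are retried. Anything else propagates immediately, and after the last attempt the caller receives the exception and can route the request to a fallback path.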

Volume Exposes Weak Design

Low volume hides flaws.

High volume exposes them fast.

When calls spike, leads pile up, or workflows run continuously, small design decisions become expensive problems. Systems that rely on manual cleanup or human oversight do not scale.

Production systems are designed to run unattended.

What Production-Grade AI Actually Looks Like

Production-grade AI systems are boring in the best way.

They are:

- Predictable

- Observable

- Constrained

- Integrated

- Designed for failure

They assume things will go wrong and plan for it.

AI becomes powerful when it is embedded inside a system that controls how and when it acts.

The Real Difference

The difference between AI experiments and real systems is intent.

Experiments ask, “Can this work?”

Production systems ask, “What happens when this breaks?”

At Sailient Solutions, we build AI infrastructure designed for real operations, not demos: systems that run under load, integrate cleanly, and behave predictably.

Because in business, reliability beats novelty every time.

Steven Janiak is the founder of Sailient Solutions, an AI and business infrastructure consultancy based in Charleston, South Carolina. He specializes in AI-driven revenue systems, CRM automation, and operational architecture for growth-stage service businesses. With over a decade of experience building high-performance web and MarTech systems, Steven focuses on practical AI implementations that drive measurable ROI.

Revenue Systems

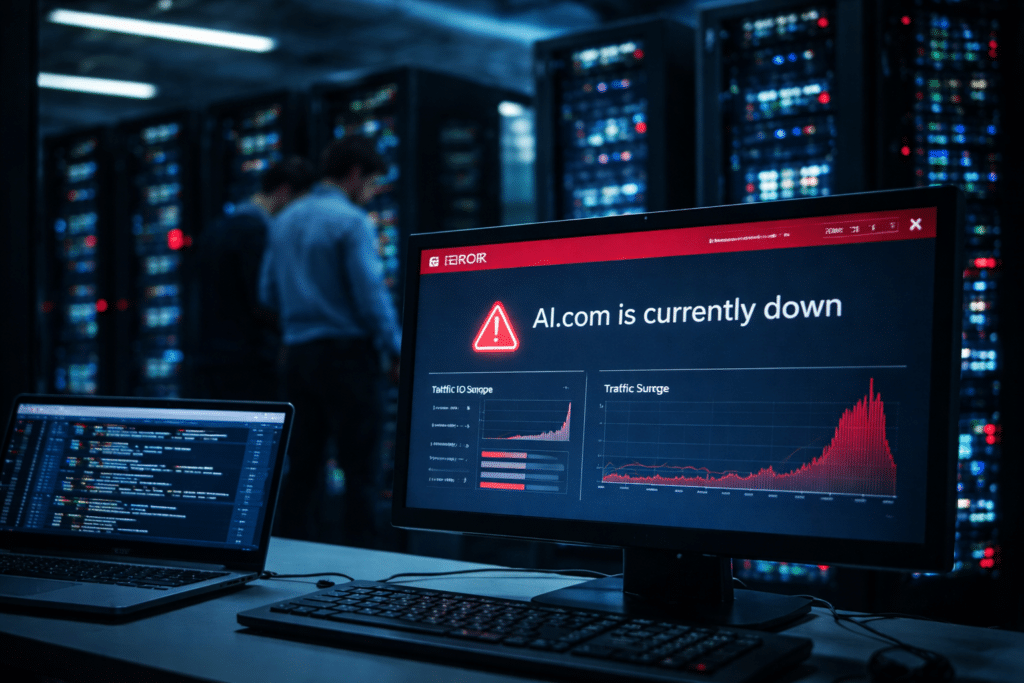

AI.com Crashed During the Super Bowl. Here’s the Real Lesson for Businesses Betting on AI

4 min read

See How This Applies to Your Business

You just read the concept. Now see what it would look like inside your business and what systems would actually make sense.

Guide delivered instantly via email